1/13/2024 Redshift WLM

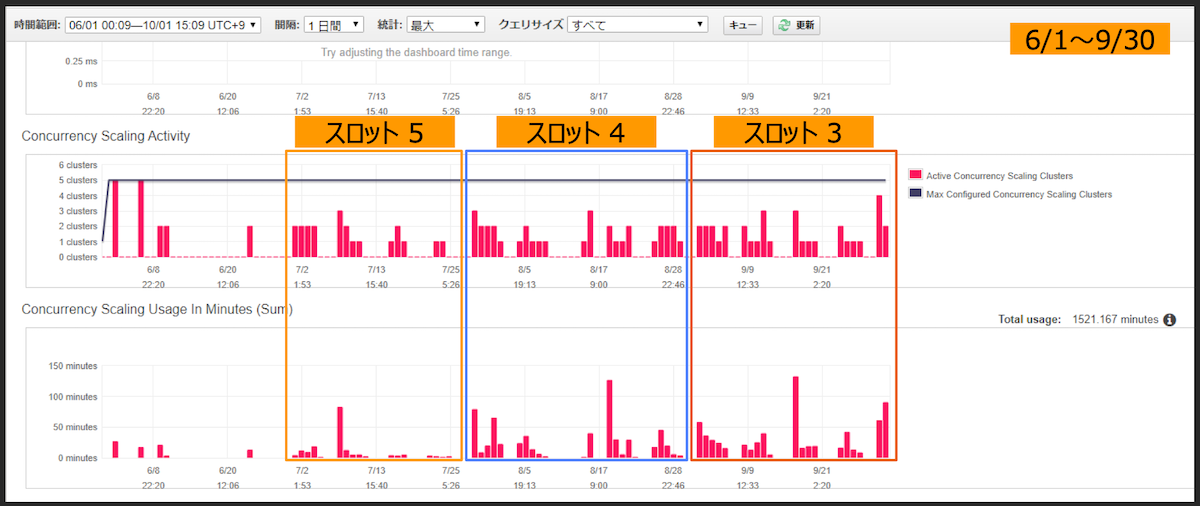

Now, you do have the third option of scaling the cluster up and down through elastic resizing. However, it is still disruptive, speculative and manual. It is essentially switching from one static state of the cluster to another, at set times, out of human speculation. Critically, this is designed for both read and write operations, scaling up or down. If the burst is solely in read operations, just as the above scenario describes, then there is a much more agile and automated option: concurrency scaling.

In 2019, Amazon introduced Concurrency Scaling in Redshift. In a nutshell, you can now configure Redshift so that it automatically adds additional cluster capacity as needed when processing an increase in concurrent read queries. This extra processing power is automatically removed when it is no longer needed, making it ideally placed to handle those burst reads. The maximum number of concurrency scaling (i.e. additional) clusters can be configured from 1 to 10, and if you need more, you can request it from Amazon. Redshift will spin up just enough additional clusters to handle the burst. The process is transparent and completed within seconds. The cost is on a granular per-second basis: the total number of seconds the additional clusters stay online.

The cool part is the free concurrency scaling credit Amazon provides. Amazon lets you accumulate a full hour of credit for every 24 hours of the cluster running. For example, if you create a new cluster today and leave it running, you will have accumulated 1 hour worth of concurrency scaling credit at the same time tomorrow. When the concurrency scaling happens, it digs into the free credit balance first. Only when the free credit has run out will you be charged. The free credit is divided equally among the concurrency scaling clusters in use. For example, if you use two concurrency scaling clusters, one hour of credit will give you 30 minutes of free burst reads; four clusters will give you 15 minutes, and so on.

As of now, Concurrency Scaling Clusters are available only in the following regions:

(Updated: Now available in five additional Regions: Canada (Central), EU (Frankfurt), Asia Pacific (Sydney), Asia Pacific (Singapore), and Asia Pacific (Seoul).)

In the following, I am going to show how a Redshift cluster behaves with concurrency scaling enabled. As a frame of reference, I will also show one without concurrency scaling.

I spun up a Redshift cluster, loaded with the sample database from AWS.

Concurrency scaling is configured via parameter sets in Workload management. The default parameter set (

This content, written by Donal Tobin, was initially posted in Looker Blog on Sep 30, 2020. The content is subject to limited support.

Many Looker users find that Redshift is an excellent, high performance database that can be used to power sophisticated dashboards. However, given Redshift’s architecture, having an increased number of tiles in a dashboard can result in slower response times and queries that take longer to complete than they initially did. In this piece, we’ll explore how to fine-tune your Redshift cluster so you can better match your Looker workloads to your Amazon Redshift configuration and render dashboards quickly and efficiently.

For many organizations, standing up an analytics stack can initially be a bit of an experiment. It often starts with an identified data need, followed by somebody spinning up an Amazon Redshift cluster, building a few data pipelines, and then connecting an analytics platform, like Looker, to visualize the results. As the data-hungry workforce grows, however, more and more people request to be set up with dashboards and data access, which creates a new challenge: a data bottleneck. The key to solving data bottlenecks lies in balancing your Looker workloads with your Redshift setup. To better understand this, let’s first discuss how Amazon Redshift processes queries before looking closer at how Looker generates workloads.

Amazon Redshift workload management and query queues

Amazon Redshift operates in a queuing model, and offers a key feature in the form of the workload management (WLM) console. The WLM console allows you to set up different query queues, and then assign a specific group of queries to each queue.

For example, you can assign data loads to one queue, and your ad-hoc queries to another. By separating these workloads, you ensure that they don’t block each other.
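To make the queue idea concrete (data loads in one WLM queue, ad-hoc queries in another, with concurrency scaling configured through a WLM parameter set), here is a minimal Python sketch that builds a manual WLM queue list and serializes it to JSON. The query group names `load` and `adhoc`, the slot counts, and the memory percentages are illustrative assumptions, not values from the article. The key names follow the shape of Redshift's `wlm_json_configuration` parameter as I understand it, so check them against the current AWS documentation before applying anything to a real cluster.

```python
import json


def build_wlm_config(load_slots=2, adhoc_slots=5):
    """Build a manual-WLM queue list with separate load and ad-hoc queues.

    All names and numbers here are hypothetical examples.
    """
    queues = [
        {
            # Queue for data loads; burst scaling left off in this sketch.
            "query_group": ["load"],
            "query_concurrency": load_slots,
            "memory_percent_to_use": 30,
            "concurrency_scaling": "off",
        },
        {
            # Queue for ad-hoc (dashboard) reads; eligible for concurrency scaling.
            "query_group": ["adhoc"],
            "query_concurrency": adhoc_slots,
            "memory_percent_to_use": 50,
            "concurrency_scaling": "auto",
        },
        {
            # Default queue: catches queries that match no query group.
            "query_concurrency": 5,
            "memory_percent_to_use": 20,
        },
    ]
    # Sanity check: the queues cannot claim more than all of the memory.
    total_memory = sum(q["memory_percent_to_use"] for q in queues)
    if total_memory > 100:
        raise ValueError(f"memory percentages add up to {total_memory}, over 100")
    return queues


wlm_json = json.dumps(build_wlm_config(), indent=2)
print(wlm_json)
```

The resulting JSON string is the kind of value the `wlm_json_configuration` parameter of a parameter group takes. A session would then route its queries to a queue with a statement such as `SET query_group TO 'load';` before running them.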
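The free-credit arithmetic for concurrency scaling (one hour of credit per 24 hours of cluster uptime, split equally among the scaling clusters in use) can be sketched in a few lines of Python. The function names are made up for illustration. The sketch assumes credit accrues linearly with uptime and does not model any cap on the accumulated balance, neither of which the text above specifies.

```python
def accrued_credit_seconds(hours_running):
    """Credit earned so far: one hour (3600 s) per 24 hours of uptime.

    Linear accrual is an assumption; the text only gives the 24-hour figure.
    """
    return hours_running * 3600 / 24


def free_burst_seconds(credit_seconds, scaling_clusters):
    """Wall-clock seconds of free burst when the credit is split equally."""
    if scaling_clusters < 1:
        raise ValueError("need at least one concurrency scaling cluster")
    return credit_seconds / scaling_clusters


def billable_seconds(burst_seconds, scaling_clusters, credit_seconds):
    """Cluster-seconds charged once the free credit has been used up."""
    used = burst_seconds * scaling_clusters
    return max(0, used - credit_seconds)


credit = accrued_credit_seconds(24)           # one day of uptime
print(free_burst_seconds(credit, 2) / 60)     # minutes of free burst with 2 clusters
print(free_burst_seconds(credit, 4) / 60)     # minutes of free burst with 4 clusters
print(billable_seconds(1200, 4, credit))      # 20-minute burst on 4 clusters
```

With one hour of credit, two clusters give 30 minutes and four give 15, matching the examples in the text. A 20-minute burst on four clusters uses 4800 cluster-seconds, so 1200 of them would be billed.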
